Process Instrumentation: Oil & Gas Basics

Process Instrumentation

What Is Process Instrumentation?

Process instrumentation covers every sensor, transmitter, gauge, and controller that keeps an oil and gas plant running within safe operating limits. In practice, the instrumentation engineer’s job boils down to one question: “What is the process doing right now, and is it what we want?”

The hardware breaks into three layers. Sensors and transmitters sit on the pipe, vessel, or tank and measure pressure, temperature, flow, and level. Control valves and actuators adjust process conditions based on commands from the control system. Controllers (DCS or PLC) close the loop: they read the transmitter signals, compare them against setpoints, and drive the valves accordingly.

Why does any of this matter? Four reasons. First, monitoring: you cannot control what you cannot see, and a refinery has thousands of process variables running simultaneously. Second, control: automatic regulation of flow rates, pressures, and temperatures keeps product on-spec and throughput high. Third, safety: instrumentation detects abnormal conditions (gas leaks, overpressure, overheating) and triggers alarms or emergency shutdowns before people get hurt. Fourth, compliance: environmental regulators require continuous monitoring of emissions and effluents, and the data has to be traceable.

Key Takeaway: Process instrumentation in oil and gas encompasses sensors, transmitters, and control devices that measure pressure, temperature, flow, level, and analytical parameters. Communication relies on standardized signals: 4-20 mA analog, HART hybrid, and digital bus protocols (Fieldbus, Profibus). Proper calibration, metrological confirmation, and measurement uncertainty management are required for safety and regulatory compliance.

Types of Process Instruments

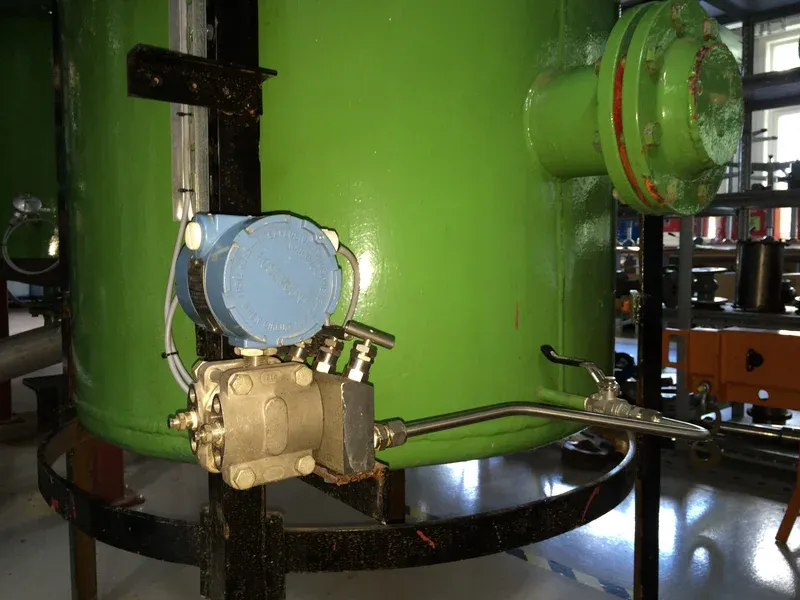

1. Pressure Instruments (Transmitters, Gauges)

Differential pressure sensor

Pressure Transmitters

A pressure transmitter converts the mechanical force of a gas or liquid into a standard electrical signal (typically 4-20 mA or 0-10 VDC) that the control system can read. The sensor element (usually a piezoresistive diaphragm or a capacitive cell) deflects under pressure, and onboard electronics linearize and temperature-compensate the output.

| Feature | Typical Specification |
|---|---|
| Accuracy | 0.04 % to 0.1 % of span (high-end models) |
| Output | 4-20 mA, 0-10 VDC, HART, Fieldbus |
| Process connections | Threaded (1/2" NPT), flanged, direct-mount |
| Pressure range | Vacuum to 10,000+ bar depending on model |

Pressure transmitters are the workhorses of any plant: pipeline and vessel monitoring, pump control, and overpressure safety systems all depend on them.
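Because accuracy is quoted as a percentage of span, the absolute error band depends on how the transmitter is ranged. A minimal Python sketch, assuming a hypothetical transmitter rated at 0.075 % of span (a value inside the range in the table above) and ignoring the turndown-dependent terms that many datasheets add:

```python
def accuracy_band(lrv: float, urv: float, accuracy_pct_of_span: float) -> float:
    """Return the +/- error band in engineering units for an accuracy quoted as % of span.

    lrv/urv are the lower and upper range values the transmitter is calibrated to.
    """
    span = urv - lrv
    return span * accuracy_pct_of_span / 100.0


# Hypothetical transmitter rated at 0.075 % of span, ranged two different ways
for lrv, urv in [(0.0, 100.0), (0.0, 20.0)]:
    band = accuracy_band(lrv, urv, 0.075)
    print(f"range {lrv:g}-{urv:g} bar -> +/- {band:.3f} bar")
```

Narrowing the calibrated range shrinks the absolute band (0.075 bar on a 0-100 bar range versus 0.015 bar on 0-20 bar), which is one reason to range a transmitter close to its expected operating window.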

Pressure Gauges

Pressure gauges are the mechanical counterpart: a Bourdon tube or diaphragm drives a needle across a calibrated dial. No power supply, no signal conditioning, no configuration software. An operator walks up, reads the gauge, and knows where the pressure sits. That simplicity is exactly why every well-instrumented plant still has hundreds of them: they serve as local indication and as a cross-check against the transmitter reading. They are also the first instrument maintenance technicians consult during troubleshooting.

Gauges are available in psi, bar, kPa, and other units, with options for liquid-filled cases (to dampen vibration and pulsation), diaphragm seals (for corrosive media), and safety patterns (solid front and blow-out back for operator protection).

2. Temperature Instruments

Temperature transmitter

Temperature Transmitters

A temperature transmitter sits in the connection head of a thermowell assembly and converts the low-level millivolt or resistance signal from the sensor into a reliable 4-20 mA output. Without a transmitter, the weak sensor signal would pick up electrical noise on long cable runs, a real headache on a plant where cable trays run next to high-voltage motor feeds. Modern transmitters also support HART, Foundation Fieldbus, or Profibus, which means you can interrogate diagnostics and re-range the device from the control room without sending someone into the field.

Thermocouples vs. RTDs

The two workhorse sensor technologies in process plants are thermocouples and RTDs, and choosing the wrong one for the application is a common engineering mistake.

| Parameter | Thermocouple | RTD (Pt100 / Pt1000) |
|---|---|---|
| Operating principle | Seebeck effect (voltage from dissimilar metals) | Resistance change of platinum wire |
| Temperature range | -200 °C to +1800 °C (Type K/N/S/R/B) | -200 °C to +850 °C |
| Accuracy | ± 1.5 °C typical (Class 1, Type K) | ± 0.3 °C typical (Class A, Pt100) |
| Long-term stability | Drifts over time, especially at high temps | Excellent; platinum is very stable |
| Response time | Fast (small junction mass) | Slower (larger sensing element) |
| Cost | Lower sensor cost | Higher, but lower lifecycle cost at moderate temps |

The rule of thumb most instrument engineers follow: use RTDs below 500 °C where accuracy matters, and reserve thermocouples for high-temperature service (fired heaters, flue gas stacks, reactor internals) or where fast response is critical. Types K, J, T, and E cover most oil and gas applications; Types S, R, and B handle the extreme temperatures found in metallurgical or glass processes.
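On the RTD side, the resistance-temperature curve of industrial platinum sensors is usually described by the Callendar-Van Dusen equation with the IEC 60751 coefficients. The sketch below covers only 0 °C and above, where the equation reduces to a quadratic (an extra C term applies below 0 °C); it illustrates what a transmitter's linearization does and is not a replacement for it.

```python
import math

# IEC 60751 Callendar-Van Dusen coefficients for industrial platinum RTDs
A = 3.9083e-3   # 1/°C
B = -5.775e-7   # 1/°C^2  (the C coefficient is only needed below 0 °C)


def pt_resistance(t_c: float, r0: float = 100.0) -> float:
    """Resistance of a platinum RTD (Pt100 by default) for 0 °C <= t <= 850 °C."""
    if t_c < 0:
        raise ValueError("this sketch covers 0 to 850 °C only")
    return r0 * (1 + A * t_c + B * t_c ** 2)


def pt_temperature(r_ohm: float, r0: float = 100.0) -> float:
    """Invert the quadratic R = R0 (1 + A t + B t^2) to recover temperature."""
    if r_ohm < r0:
        raise ValueError("R < R0 means t < 0 °C, which this sketch does not cover")
    return (-A + math.sqrt(A * A - 4 * B * (1 - r_ohm / r0))) / (2 * B)


print(f"{pt_resistance(100.0):.4f} ohm")     # 138.5055 ohm
print(f"{pt_temperature(138.5055):.3f} °C")  # 100.000 °C
```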

3. Flow Instruments

Vortex Flow Meters

Flow instruments fall into two categories: flow meters that continuously measure volume or mass flow rate, and flow switches that trigger an action when flow crosses a threshold.

Flow Meters (Coriolis, Ultrasonic, Turbine, etc.)

Flow meters are arguably the most technology-diverse instrument category. The choice of meter depends heavily on the fluid (clean liquid, dirty slurry, gas, steam, multiphase), the required accuracy, and the allowable pressure drop. Coriolis meters give you mass flow and density in one device, hard to beat for custody transfer. Ultrasonic meters are non-intrusive and have no moving parts. Turbine meters are simple and accurate on clean fluids but wear out fast on anything abrasive.

Flow Switches

Flow switches are on/off devices. When flow drops below (or rises above) a preset threshold, the switch changes state and triggers an alarm, starts a backup pump, or initiates a safety shutdown. They are simple, cheap, and extremely common in pump protection circuits. A flow switch on the cooling water line prevents a pump from running dry and burning out its mechanical seal.

4. Level Instruments

Industrial Level Transmitter

Level measurement splits into three tiers: continuous transmitters for the DCS, local gauges for operator rounds, and switches for alarm/trip functions.

Level Transmitters

| Technology | Principle | Best suited for |
|---|---|---|
| Radar (guided wave or non-contact) | Microwave pulse time-of-flight | Hydrocarbon tanks, high-pressure vessels, foaming liquids |
| Ultrasonic | Sound pulse time-of-flight | Clean liquids, open tanks, water/wastewater |
| Hydrostatic (DP) | Pressure of liquid column | Simple liquid services, well-understood and inexpensive |
| Capacitance | Dielectric change between probes | Interface measurement, solids level in silos |

Radar level transmitters have largely taken over from DP-based level measurement on new projects because they are unaffected by density changes, temperature swings, or gas blankets above the liquid surface. That said, DP level transmitters still outnumber everything else in brownfield plants, and they work perfectly well when the fluid density is stable and the impulse lines are properly maintained.
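Why density matters for DP level is easiest to see from the hydrostatic relation h = ΔP / (ρ·g). A minimal sketch, assuming an open (vented) tank, no wet leg or seal fluid, and a hypothetical 50 kPa reading:

```python
G = 9.80665  # standard gravity, m/s^2


def level_from_dp(dp_pa: float, density_kg_m3: float) -> float:
    """Liquid height above the lower tap for an open tank: h = dP / (rho * g)."""
    return dp_pa / (density_kg_m3 * G)


dp = 50_000.0  # hypothetical differential pressure reading, Pa
for rho in (1000.0, 850.0):
    print(f"rho = {rho:.0f} kg/m3 -> h = {level_from_dp(dp, rho):.2f} m")
```

The same 50 kPa maps to about 5.10 m of water but about 6.00 m of an 850 kg/m³ liquid, so a DP level loop configured for one density reads wrong if the fluid changes; radar avoids this because it measures distance directly.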

Level Gauges

Level gauges provide direct visual indication on the vessel itself. Glass tube gauges let you see the liquid directly; magnetic level gauges use a float inside a chamber that flips coloured flags on an external scale (no glass to break, so they are preferred in high-pressure or hazardous service). Float-operated gauges connect a float to a mechanical pointer and are common on atmospheric tanks.

Float Switches

Float switches are the level equivalent of flow switches: simple on/off devices that trigger pump start/stop or high/low level alarms. A float rises with the liquid, actuates a magnetic or mechanical contact, and changes the switch state. No power is required for the switching action itself, which makes them inherently reliable.

5. Analytical Instruments

Portable Multi-Gas Detector

Analytical instruments tell you what is in the process stream, not just how much of it there is. They tend to be the most maintenance-intensive instruments in the plant: electrodes foul, sample lines plug, and calibration gas bottles run out at the worst possible time. Budget accordingly.

Gas Analyzers

Gas analyzers detect and quantify components of a gas mixture. The three main sensing technologies are infrared (IR) absorption, mass spectrometry, and electrochemical cells. IR analyzers are the most common for continuous emission monitoring. Mass spectrometers handle multicomponent analysis. Electrochemical sensors are compact and inexpensive; you will find them in portable detectors and fixed-point monitors alike.

pH Meters and Conductivity Sensors

pH meters use a glass electrode to measure hydrogen ion activity. They require automatic temperature compensation (pH is temperature-dependent) and frequent calibration, as a dirty or aged electrode drifts fast. Conductivity sensors measure the ionic concentration of a solution by passing a voltage between two electrodes and reading the resulting current. Both instruments are critical in water treatment, chemical dosing, and effluent monitoring.
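The temperature compensation matters because the electrode's theoretical (Nernst) slope, ln(10)·R·T/F, scales with absolute temperature: about 59.16 mV per pH unit at 25 °C. A minimal sketch, assuming an ideal electrode that reads 0 mV at pH 7 and follows E = slope·(7 − pH); real electrodes need a two-point calibration of offset and slope:

```python
import math

R = 8.314462618   # molar gas constant, J/(mol*K)
F = 96485.33212   # Faraday constant, C/mol


def nernst_slope_mv(temp_c: float) -> float:
    """Theoretical electrode slope in mV per pH unit at the given temperature."""
    return 1000.0 * math.log(10) * R * (temp_c + 273.15) / F


def ph_from_mv(e_mv: float, temp_c: float) -> float:
    """Ideal electrode assumed: 0 mV at pH 7 and E = slope * (7 - pH)."""
    return 7.0 - e_mv / nernst_slope_mv(temp_c)


print(f"{nernst_slope_mv(25.0):.2f} mV/pH")  # 59.16 mV/pH
print(f"{ph_from_mv(177.5, 25.0):.2f}")      # 4.00
print(f"{ph_from_mv(177.5, 80.0):.2f}")      # 4.47
```

The same 177.5 mV signal means pH 4.00 at 25 °C but about pH 4.47 at 80 °C, so an uncompensated meter would be off by roughly half a pH unit. Note that this slope correction does not account for the sample's own pH changing with temperature.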

Flammable Gas Detectors

Gas detectors prevent fires and explosions by sensing flammable gas concentrations well before they reach the lower explosive limit (LEL). Catalytic bead sensors are the traditional choice: they oxidize a gas sample on a heated element and measure the heat generated. Infrared point detectors are gaining ground because they do not consume the gas and are not poisoned by silicones or lead compounds, a real problem with catalytic sensors in certain environments.

6. Control Valves

Control valves regulate the flow of oil, gas, and other fluids by throttling in response to signals from the control system, adjusting process conditions as needed.

7. Safety Instruments

Gas detectors monitor for hazardous leaks and provide early warning before concentrations reach dangerous levels. Emergency Shutdown Systems (ESD) automatically trip processes or equipment when predefined hazardous conditions are detected (overpressure, high level, loss of containment), protecting both personnel and the facility.

8. Data Acquisition and Signal Conditioning

SCADA systems (Supervisory Control and Data Acquisition) collect field data for centralized monitoring and control. Signal converters and isolators condition the raw field signals so they can be transmitted cleanly to the DCS or PLC without ground loops or electrical noise corrupting the reading.

Control Signals: Analog, Hybrid, Digital

How instruments communicate with the control system is just as important as what they measure. Three signal families dominate the industry.

| Signal Type | Technology | Key Advantage |
|---|---|---|
| Analog | 4-20 mA current loop, 0-10V | Simple, well-understood, wide equipment compatibility |
| Hybrid (HART) | Digital overlay on 4-20 mA | Compatible with existing infrastructure; simultaneous analog + digital |
| Digital (Bus) | Fieldbus, Profibus, Modbus | Higher accuracy, noise immunity, diagnostics and remote configuration |

The paragraphs below describe the standardized analog pneumatic signals (20 to 100 kPa) and electrical signals (4 to 20 mA), the hybrid analog/digital HART (Highway Addressable Remote Transducer) signal, and the fully digital communication protocols commonly called buses.

Analog Control Signals

The 4-20 mA current loop remains the backbone of field instrumentation. The signal is linear: 4 mA represents 0 % of the measured range, 20 mA represents 100 %. The “live zero” at 4 mA (rather than 0 mA) serves two purposes: it powers the loop-powered transmitter, and it allows the control system to distinguish a failed or disconnected instrument (0 mA) from a legitimate low reading. Voltage signals (0-10 VDC) are common inside the control room but rarely used in the field because they are susceptible to voltage drop and noise over long cable runs.
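The linear scaling and the live-zero fault check can be sketched in a few lines. The fault thresholds below (3.6 mA and 21.0 mA) are loosely modeled on NAMUR NE 43 and are an assumption for this example; the actual limits are configured in each DCS or PLC input card:

```python
from typing import Optional

# Fault thresholds loosely modeled on NAMUR NE 43 (assumed; check your system settings)
FAULT_LOW_MA = 3.6
FAULT_HIGH_MA = 21.0


def ma_to_eng(current_ma: float, lrv: float, urv: float) -> Optional[float]:
    """Scale a 4-20 mA signal linearly to engineering units.

    Returns None when the current is in the fault region, e.g. 0 mA from a broken wire.
    """
    if current_ma <= FAULT_LOW_MA or current_ma >= FAULT_HIGH_MA:
        return None
    fraction = (current_ma - 4.0) / 16.0  # 4 mA -> 0 %, 20 mA -> 100 %
    return lrv + fraction * (urv - lrv)


# Hypothetical 0-10 bar transmitter
for reading in (4.0, 12.0, 20.0, 0.0):
    print(reading, "mA ->", ma_to_eng(reading, 0.0, 10.0))
```

Currents between the fault threshold and 4 mA (or between 20 mA and the upper threshold) scale to slightly under- or over-range values, which many systems clamp or flag separately.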

The traditional standardized transmission signals are:

- Direct current signals (Table 1): for connections between instruments over long distances (i.e. in the field area)

- Direct voltage signals (Table 2): for connections between instruments over short distances (i.e. in the control room)

| LOWER LIMIT (mA) | UPPER LIMIT (mA) | NOTE |
|---|---|---|
| 4 | 20 | (1) |
| 0 | 20 | |

(1) Preferential signal

Table 1 - Standardized signals in direct current (IEC 60381-1)

| LOWER LIMIT (V) | UPPER LIMIT (V) | NOTE |
|---|---|---|
| 1 | 5 | (1) |
| 0 | 5 | (1) |
| 0 | 10 | (1) |
| -10 | +10 | (2) |

(1) Voltage signals that can be derived directly from normalized current signals
(2) Voltage signals that can represent physical quantities of a bipolar nature

Table 2 - Standardized signals in direct voltage (IEC 60381-2)

Current signals are used for field instrumentation; voltage signals are used in the technical and control room. The current signal has the advantage of being unaffected by cable length and impedance, up to the resistance limits shown in Figure 1.

Figure 1 - Example of the limit of the operating region for the field instrumentation in terms of its connection resistance Ω to the supply voltage V

Key:

- Vdc = actual supply voltage, in volts

- Vmax = maximum supply voltage, 30 V in this example

- Vmin = minimum supply voltage, 10 V in this example

- RL = maximum load resistance, in ohms, at the actual supply voltage:

- RL ≤ (Vdc - 10) / 0.02 (for the example in Figure 1)
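The load-resistance rule from Figure 1 can be turned into a quick loop check. A minimal sketch using the example values above (Vmin = 10 V, Vmax = 30 V, 20 mA); the loop components in the usage lines (sense resistor, cable, barrier resistance) are hypothetical:

```python
V_MIN = 10.0    # minimum supply voltage in the Figure 1 example, V
V_MAX = 30.0    # maximum supply voltage in the Figure 1 example, V
I_LOOP = 0.020  # loop current used in the Figure 1 formula, A


def max_load_ohm(vdc: float) -> float:
    """RL <= (Vdc - Vmin) / 0.02, valid only between Vmin and Vmax."""
    if not V_MIN <= vdc <= V_MAX:
        raise ValueError(f"supply {vdc} V is outside the {V_MIN}-{V_MAX} V operating region")
    return (vdc - V_MIN) / I_LOOP


def loop_ok(vdc: float, loads_ohm: list[float]) -> bool:
    """True if the total series resistance in the loop stays within the limit."""
    return sum(loads_ohm) <= max_load_ohm(vdc)


# Hypothetical loop: 250 ohm sense resistor, 80 ohm of cable, 100 ohm barrier
loads = [250.0, 80.0, 100.0]
print(max_load_ohm(24.0))     # about 700 ohm
print(loop_ok(24.0, loads))   # True  (430 ohm <= 700 ohm)
print(loop_ok(15.0, loads))   # False (430 ohm > 250 ohm)
```

The Figure 1 formula uses 20 mA; some vendor load charts size the loop for a higher alarm current instead, which lowers the permissible resistance.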

Hybrid Control Signals (HART)

The HART protocol (Highway Addressable Remote Transducer) is the most widely deployed hybrid signal in process plants. It overlays a frequency-shift-keyed (FSK) digital signal on top of the standard 4-20 mA current loop using Bell 202 modulation at +/- 0.5 mA. The analog signal carries the process variable in real time, while the digital layer carries configuration data, diagnostics, and secondary variables. Because the FSK signal averages to zero, it does not disturb the analog reading.
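The "averages to zero" property can be checked numerically. This sketch generates idealized, phase-continuous sinusoidal Bell 202 FSK (1200 Hz for logic 1, 2200 Hz for logic 0, 1200 bit/s, ±0.5 mA) on top of a 12 mA analog current; the random bit pattern and the 57.6 kHz simulation sample rate are assumptions, and real HART modems shape and filter the signal:

```python
import math
import random

BIT_RATE = 1200        # HART (Bell 202) bit rate, bit/s
F_MARK = 1200.0        # frequency for logic 1, Hz
F_SPACE = 2200.0       # frequency for logic 0, Hz
AMPLITUDE_MA = 0.5     # peak FSK current superimposed on the loop, mA
SAMPLE_RATE = 57_600   # simulation sample rate (assumed): 48 samples per bit


def fsk_loop_current(bits: list[int], analog_ma: float) -> list[float]:
    """Idealized phase-continuous sinusoidal FSK added to a constant analog current."""
    samples_per_bit = SAMPLE_RATE // BIT_RATE
    phase = 0.0
    samples = []
    for bit in bits:
        step = 2 * math.pi * (F_MARK if bit else F_SPACE) / SAMPLE_RATE
        for _ in range(samples_per_bit):
            samples.append(analog_ma + AMPLITUDE_MA * math.sin(phase))
            phase += step  # phase carries over between bits (no discontinuities)
    return samples


random.seed(1)
bits = [random.randint(0, 1) for _ in range(200)]  # arbitrary test message
loop = fsk_loop_current(bits, analog_ma=12.0)
print(f"mean loop current: {sum(loop) / len(loop):.4f} mA")  # very close to 12 mA
```

Because each bit contributes only a fraction of a cycle's worth of net area, the mean stays within a fraction of a microampere of 12 mA here, which is why an analog input that averages or low-pass filters the loop current does not see the HART traffic.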

The biggest practical advantage: HART works on existing 4-20 mA wiring. Plants can upgrade instruments to smart transmitters without pulling new cable, a major cost saving on brownfield projects.

NOTE: To operate, the HART protocol requires a load resistance in the output circuit, typically 250 Ω.
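Ohm's law shows what the resistor does: a 250 Ω resistor turns 4-20 mA into 1-5 V, which is how a 1-5 V signal is commonly derived from a current loop, and it also converts the ±0.5 mA HART component into a voltage a modem can detect. A minimal sketch (the function name is illustrative):

```python
SENSE_RESISTOR_OHM = 250.0  # typical HART load resistor


def resistor_voltage(current_ma: float, r_ohm: float = SENSE_RESISTOR_OHM) -> float:
    """Voltage across the loop resistor, V = I * R (current given in mA)."""
    return current_ma / 1000.0 * r_ohm


for i_ma in (4.0, 12.0, 20.0):
    print(f"{i_ma:4.1f} mA -> {resistor_voltage(i_ma):.2f} V")  # 1.00, 3.00, 5.00 V

# The +/-0.5 mA HART FSK component appears as roughly +/-0.125 V across the same resistor
print(f"HART peak: {resistor_voltage(0.5) * 1000:.0f} mV")  # 125 mV
```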

Table 3 - HART protocol with signals standardized BELL 202

Digital Control Signals (Bus Protocols)

Fully digital fieldbus protocols transmit process data in binary format, providing higher accuracy, noise immunity, and built-in diagnostics that analog signals cannot match. Foundation Fieldbus, Profibus, and Modbus are the most common in oil and gas. The standardization of digital bus signals was formalized in the late 1990s with IEC 61158, though adoption has been slower than expected, partly because the standard encompasses eight distinct protocols. Each protocol is defined by specific characteristics (Table 4):

- Transmission encoding: Preamble, frame start, transmission of the frame, end of the frame, transmission parity, etc.

- Access to the network: Probabilistic, deterministic, etc.

- Network management: Master-Slave, Producer-Consumer, etc.

| IEC 61158 PROTOCOL TYPE | PROTOCOL NAME | NOTE |
|---|---|---|
| 1 | Standard IEC | (1) |
| 2 | ControlNet | |
| 3 | Profibus | |
| 4 | P-Net | |
| 5 | Fieldbus Foundation | |
| 6 | SwiftNet | |
| 7 | WorldFip | |
| 8 | InterBus | |

(1) Protocol initially designed as the unique standard IEC protocol

Table 4 - Standardized protocols provided for by the International Standard IEC 61158

Figure 2 shows the signal path from field to control room. Signals originate at the field instrument, travel through the technical room (where marshaling and current-to-voltage conversion take place), feed into the DCS (Distributed Control System), and finally reach the operator station and HMI (Human Machine Interface) in the control room.

Figure 2 - The typical path of a measurement chain from the field to the control room

Power Supply for Process Instruments

Picking the right power supply is not glamorous, but get it wrong and you have instruments that brown out, drift, or simply go dark. The choice depends on the instrument’s power draw, the cable distance, and the hazardous area classification.

| Power Supply Type | Specifications | Typical Applications |
|---|---|---|
| DC Power (24V DC) | Most common for process instrumentation | Sensors, transmitters; long-distance powering with minimal voltage drop |
| DC Battery-powered | Wireless/remote instruments | Data loggers, remote sensors, portable instruments |
| AC Power (110-240V) | Heavy-duty/high-power instruments | Control room equipment, pumps, motors, machinery |
| Loop-powered | Derives power from 4-20 mA control loop | Remote/hazardous location transmitters; simplifies wiring |
| Intrinsically Safe (IS) | Limits energy to prevent ignition | Hazardous areas with flammable gases or dust |
| Solar-powered | Solar panels charging batteries | Remote monitoring, wellhead automation, outdoor applications |
| Energy Harvesting | Vibration, thermal, or light sources | Emerging technology for self-sustaining instruments |

Standard Power and Signal Specifications

In summary:

- For pneumatic instrumentation: 140 ± 10 kPa (1.4 ± 0.1 bar); the supply is sometimes still specified in English units as 20 psi (≈ 1.4 bar)

- For electrical instrumentation: 24 V DC for field instrumentation and 220 V AC for control and technical room instrumentation

The connection and transmission signals between the various instruments in the measuring and regulating chains are standardized by the IEC (International Electrotechnical Commission):

- Pneumatic signals (IEC 60382): 20 to 100 kPa (0.2 to 1.0 bar) (sometimes the standardized signal is still in English units: 3 to 15 psi, ≈ 0.21 to 1.03 bar)

- Electrical signals (IEC 60381-1 for direct current, IEC 60381-2 for direct voltage): 4 to 20 mA is the preferred current signal
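Because every standardized signal is linear over its range, converting between them (for example, an electrical controller output driving a pneumatic valve through an I/P converter) is a percentage-of-range mapping. A minimal sketch, assuming an ideal, perfectly linear converter; note that 3-15 psi is only approximately 20-100 kPa, so mapping to it is range-to-range, not a unit conversion:

```python
def rescale(value: float, src: tuple[float, float], dst: tuple[float, float]) -> float:
    """Map a value linearly from one standardized signal range to another."""
    fraction = (value - src[0]) / (src[1] - src[0])
    return dst[0] + fraction * (dst[1] - dst[0])


CURRENT_MA = (4.0, 20.0)        # IEC 60381-1 preferred range
PNEUMATIC_KPA = (20.0, 100.0)   # IEC 60382
PNEUMATIC_PSI = (3.0, 15.0)     # legacy English-unit range

print(rescale(12.0, CURRENT_MA, PNEUMATIC_KPA))     # 60.0 (50 % of range)
print(rescale(60.0, PNEUMATIC_KPA, PNEUMATIC_PSI))  # 9.0
```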

Attributes for Process Instrumentation

This section covers the metrological foundations every instrument engineer should understand: precision, uncertainty, metrological confirmation, and calibration. These are not academic concepts; they directly affect whether your measurement data is reliable enough to base process and safety decisions on.

Measurement Precision

Precision and accuracy are different things, and confusing them leads to bad instrument selections. Precision (repeatability) is how closely repeated readings agree with each other. Accuracy is how close those readings are to the true value. An instrument can be precise but inaccurate; it gives the same wrong answer every time. For process control, precision actually matters more in many loops: if the offset from the true value is constant and known, the controller can compensate. But you still need periodic calibration to confirm that the offset has not drifted.
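The distinction is easy to quantify from repeated readings against a known reference: the mean offset (bias) describes accuracy, and the sample standard deviation describes precision. A minimal sketch; the reference value and the readings are hypothetical:

```python
from statistics import mean, stdev

reference_bar = 10.00                                # value applied by a calibrator (hypothetical)
readings_bar = [10.21, 10.19, 10.20, 10.22, 10.18]   # repeated readings (hypothetical)

bias = mean(readings_bar) - reference_bar  # systematic offset -> accuracy
spread = stdev(readings_bar)               # sample standard deviation -> precision

print(f"bias = {bias:+.3f} bar, repeatability (1 std dev) = {spread:.3f} bar")
# bias = +0.200 bar, repeatability (1 std dev) = 0.016 bar
```

This instrument is precise but inaccurate: the readings agree within about 0.016 bar, yet all sit about 0.2 bar high, an offset that calibration can reveal and adjustment can remove.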

The concept of measurement precision is defined in various ways by different entities involved in the specification of process instruments:

- according to ISO-IMV (International Metrology Vocabulary): “…closeness of agreement between indications or measured quantity values obtained by replicate measurements on the same or similar objects under specified conditions”;

- according to IEC-IEV (International Electrotechnical Vocabulary): “…quality which characterizes the ability of a measuring instrument to provide an indicated value close to a true value of the measurand” (note: the IEV calls this property accuracy rather than precision);

- or, combining the two, a practical definition: “…by testing a measuring instrument under specified conditions and procedures, the maximum positive and negative deviations from a specified characteristic curve (usually a straight line)”.

The practical definition therefore also covers linearity (although nonlinearity is now very small in digital instrumentation). Hysteresis is not named explicitly, but it is effectively accounted for because it contributes to the maximum positive and negative deviations found.

Repeatability is not included either; it is considered only when precision is verified over several measuring cycles.

In practice, precision is often verified with a single up-and-down measurement cycle (generally for instruments with hysteresis, such as pressure gauges, pressure transducers, and load cells). This yields a calibration curve like the one in Figure 1, from which the tested accuracy (accuracy measured) is obtained. The tested accuracy must fall within the nominal accuracy (accuracy rated), i.e. the limits within which the instrument's imprecision is guaranteed by its specification.

Metrological confirmation is the verification that the measuring instrument maintains, over time, the accuracy and uncertainty characteristics required by the measurement process.

For some common instrument types (gauges, resistance thermometers, thermocouples, etc.), this limit of imprecision is also called the precision or accuracy class, which the ISO-IMV and IEC-IEV reference vocabularies define as: “class of measuring instruments or measuring systems that meet stated metrological requirements that are intended to keep measurement errors or instrumental measurement uncertainties within specified limits under specified operating conditions”. In other words, the accuracy measured must be less than the accuracy rated (see also Figure 1).

Instrument Measurement Accuracy

Figure 1 - Exemplification of measurement accuracy concepts

Measurement Uncertainty

Every measurement carries doubt. Measurement uncertainty quantifies that doubt: it defines a range within which the true value is expected to lie, at a stated confidence level. Unlike a single error value that can be corrected, uncertainty acknowledges the combined effect of all error sources: inherent instrument limitations, environmental factors (temperature, humidity, vibration), operator influence, and the uncertainty of the reference standard used during calibration.

Understanding uncertainty matters for practical reasons: if your pressure transmitter has an uncertainty of ±0.5 bar and your safety trip setpoint is only 1 bar below the design pressure, your safety margin may be thinner than you think.

The concept of measurement uncertainty is defined in various ways by different entities involved in the specification of process instruments:

- according to ISO-IMV (International Metrology Vocabulary): “non-negative parameter characterizing the dispersion of the quantity values being attributed to a measurand, based on the information used”;

- according to ISO-GUM (Guide to the Expression of Uncertainty in Measurement): “result of the estimation that determines the amplitude of the field within which the true value of a measurand must lie, generally with a given probability, that is, with a determined level of confidence”.

From the above definitions, we can deduce two fundamental concepts of measurement uncertainty:

- Uncertainty is the result of an estimate, which is evaluated in one of two ways:
  - **Type A:** when the evaluation is done by statistical methods, that is, through a series of repeated observations or measurements.
  - **Type B:** when the evaluation is done by methods other than statistical ones, that is, using data found in manuals, catalogs, specifications, etc.

- The uncertainty of the estimate must be given with a stated probability, normally expressed in one of the three following ways (see also Table 1):
  - **Standard uncertainty (u):** at a probability or confidence level of about 68 % (68.27 % for a normal distribution).
  - **Combined uncertainty (uc):** the standard uncertainty of a measurement result obtained from the values of several different quantities; it is the sum in quadrature of the standard uncertainties of the individual quantities involved in the measurement process.
  - **Expanded uncertainty (U):** uncertainty at a probability or confidence level of about 95 % (95.45 %), i.e. 2 standard deviations, assuming a normal (Gaussian) probability distribution.

Table 1 - Main terms & definitions related to measurement uncertainty according to ISO-GUM

| Term | Definition |
|---|---|
| Standard uncertainty u(x) (a) | The uncertainty of the result of a measurement expressed as a standard deviation: u(x) ≡ s(x) |
| Type A evaluation (of uncertainty) | Method of evaluation of uncertainty by the statistical analysis of a series of observations |
| Type B evaluation (of uncertainty) | Method of evaluation of uncertainty by means other than the statistical analysis of a series of observations |
| Combined standard uncertainty uc(x) | The standard uncertainty of the result of a measurement when that result is obtained from the values of several other quantities, equal to the positive square root of a sum of terms, the terms being the variances or covariances of these other quantities weighted according to how the measurement result varies with changes in these quantities |
| Coverage factor k | Numerical factor used as a multiplier of the combined standard uncertainty to obtain an expanded uncertainty (normally 2 for a probability of about 95 % and 3 for about 99.7 %) |
| Expanded uncertainty U(y) = k · uc(y) (b) | Quantity defining an interval about the result of a measurement that may be expected to encompass a large fraction of the distribution of values that could reasonably be attributed to the measurand (normally the combined standard uncertainty multiplied by a coverage factor k = 2, i.e. a coverage probability of about 95 %) |

(a) When the standard uncertainty u(x), i.e. the standard deviation s(x), is not determined experimentally from a normal (Gaussian) distribution, it can be calculated using the following relationships:

u(x) = a/√3, for rectangular distributions with an amplitude of variation ± a, e.g. indication errors

u(x) = a/√6, for triangular distributions with an amplitude of variation ± a, e.g. interpolation errors

(b) Unless otherwise specified, the expanded measurement uncertainty U(y) is understood as calculated from the combined uncertainty with a coverage factor of 2, i.e. with a probability level of about 95 %.
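Put together, these definitions give the usual uncertainty budget: convert each contribution to a standard uncertainty, combine them in quadrature, and multiply by k = 2. A minimal sketch, assuming uncorrelated inputs with unit sensitivity coefficients; all component values below are hypothetical:

```python
import math


def u_rectangular(half_width: float) -> float:
    """Standard uncertainty of a rectangular distribution of half-width a: a / sqrt(3)."""
    return half_width / math.sqrt(3)


def u_triangular(half_width: float) -> float:
    """Standard uncertainty of a triangular distribution of half-width a: a / sqrt(6)."""
    return half_width / math.sqrt(6)


def combined_standard_uncertainty(components) -> float:
    """Root-sum-of-squares, assuming uncorrelated inputs with unit sensitivity coefficients."""
    return math.sqrt(sum(u * u for u in components))


budget = {  # all values in bar, hypothetical
    "reference standard (certificate U = 0.01, k = 2)": 0.01 / 2,
    "indication / resolution (rectangular, a = 0.05)": u_rectangular(0.05),
    "interpolation (triangular, a = 0.025)": u_triangular(0.025),
    "repeatability (Type A, s = 0.01)": 0.01,
}
uc = combined_standard_uncertainty(budget.values())
U = 2 * uc  # coverage factor k = 2, about 95 % for a normal distribution
print(f"uc = {uc:.4f} bar, U (k=2) = {U:.4f} bar")  # uc = 0.0326 bar, U (k=2) = 0.0652 bar
```

Squaring before summing means the largest term dominates: here the rectangular indication term alone accounts for most of the combined uncertainty.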

Metrological Confirmation

Metrological confirmation is the formal verification that an instrument still meets the accuracy and uncertainty requirements of the measurement process it serves. It is not just calibration; it includes calibration, any necessary adjustment, comparison against the metrological requirements for the intended use, and labelling of the outcome.

ISO 10012 (Measurement management systems) places metrological confirmation within a measurement management system, defined as a “set of interrelated or interacting elements necessary to achieve metrological confirmation and continual control of measurement processes”. Metrological confirmation generally includes:

- instrument calibration and verification;

- any necessary adjustment and the consequent new calibration;

- the comparison with the metrological requirements for the intended use of the equipment;

- the labelling of a successful (positive) metrological confirmation.

Metrological confirmation for process instrumentation

The metrological confirmation must be managed through a formal measurement management system. Table 1 outlines the phases.

| NORMAL PHASES | PHASES IN CASE OF ADJUSTMENT | PHASES IN CASE OF IMPOSSIBLE ADJUSTMENT |
|---|---|---|
| 0. Equipment scheduling | | |
| 1. Identification of the need for calibration | | |
| 2. Equipment calibration | | |
| 3. Drafting of the calibration document | | |
| 4. Calibration identification | | |
| 5. Are there metrological requirements? | | |
| 6. Compliance with metrological requirements | 6a. Adjustment or repair | 6b. Adjustment impossible |
| 7. Drafting of the confirmation document | 7a. Review of confirmation intervals | 7b. Negative verification |
| 8. Confirmation status identification | 8a. Recalibration (phases 2 to 8) | 8b. Status identification |
| 9. Need satisfied | 9a. Need satisfied | 9b. Need not satisfied |

Table 1 - Main phases of the metrological confirmation (ISO 10012)

Table 1 shows three possible paths. The normal path (left column) completes successfully without adjustment. If adjustment or repair is needed and the subsequent recalibration passes (middle column), the confirmation interval should be shortened. If adjustment fails or is impossible (right column), the instrument must be downgraded or removed from service.

Metrological confirmation can be fulfilled in two ways:

- Comparing the Maximum Relieved (i.e. measured) Error (MRE) with the Maximum Tolerated Error (MTE), i.e. MRE <= MTE

- Comparing the Maximum Relieved Uncertainty (MRU) with the Maximum Tolerated Uncertainty (MTU), i.e. MRU <= MTU

As a practical example, consider a manometer with the following calibration results:

- MRE: ±0.05 bar

- MRU: 0.066 bar

If the maximum tolerated error and tolerated uncertainty are both 0.05 bar, the manometer passes on an MRE basis (0.05 <= 0.05) but fails on an MRU basis (0.066 > 0.05). It would therefore follow path 2 or path 3 of Table 1: adjustment, recalibration, or downgrading.
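The comparison itself is simple enough to automate in a calibration record. A minimal sketch of the manometer example; requiring both criteria to pass is a conservative choice made for this sketch, since the text allows confirmation by either comparison:

```python
def metrological_confirmation(mre: float, mru: float, mte: float, mtu: float):
    """Return the individual checks and an overall verdict.

    Requiring both checks to pass is an assumption of this sketch, not a rule from ISO 10012.
    """
    checks = {
        "MRE <= MTE": abs(mre) <= mte,
        "MRU <= MTU": mru <= mtu,
    }
    return checks, all(checks.values())


checks, confirmed = metrological_confirmation(mre=0.05, mru=0.066, mte=0.05, mtu=0.05)
print(checks)     # {'MRE <= MTE': True, 'MRU <= MTU': False}
print(confirmed)  # False -> adjustment/recalibration or downgrading
```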

Instruments Calibration

Calibration establishes the relationship between the known values of a reference standard and the corresponding readings of the instrument under test, under specified conditions.

Formally:

- according to ISO-IMV (International Metrology Vocabulary): “operation that, under specified conditions, in a first step, establishes a relation between the quantity values with measurement uncertainties provided by measurement standards and corresponding indications with associated measurement uncertainties and, in a second step, uses this information to establish a relation for obtaining a measurement result from an indication”;

- or, as a practical definition derived from the previous one: “operation performed to establish a relationship between the measured quantity and the corresponding output values of an instrument under specified conditions”.

Calibration should not be confused with adjustment, which means: “set of operations carried out on a measuring system so that it provides prescribed indications corresponding to given values of a quantity to be measured” (ISO-IMV).

Hence, adjustment is typically performed either before calibration, as a preliminary operation, or after it, when the instrument is found to be out of calibration.

Calibration should be performed at 3 or 5 equidistant measuring points for increasing values and, for instruments subject to hysteresis (e.g. manometers), also for decreasing values.
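
A calibration run following this rule can be planned programmatically. In this minimal sketch (the function name is hypothetical), the decreasing run starts one step below the top of the range, mirroring the results table below, which has no "down" reading at 10 bar; whether a given procedure repeats the top point is an assumption to check against that procedure.

```python
def calibration_sequence(
    lower: float, upper: float, n_points: int, hysteresis: bool
) -> list[tuple[str, float]]:
    """Return (direction, reference value) pairs for an equidistant calibration run."""
    if n_points < 2:
        raise ValueError("need at least two points to span the range")
    step = (upper - lower) / (n_points - 1)
    points = [lower + i * step for i in range(n_points)]
    sequence = [("up", p) for p in points]
    if hysteresis:
        # Decreasing run, starting one step below the top point (assumption).
        sequence += [("down", p) for p in reversed(points[:-1])]
    return sequence


if __name__ == "__main__":
    for direction, value in calibration_sequence(0.0, 10.0, 5, hysteresis=True):
        print(direction, value)
```

With `hysteresis=False` only the increasing run is produced, which is enough for instruments without appreciable hysteresis.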

Figure 1 presents the calibration setup, while Table 1 presents the calibration results.

Figure 1 - Calibration setup of a manometer

| Reference pressure (bar) | Relieved value, up (bar) | Relieved value, down (bar) | Relieved error, up (bar) | Relieved error, down (bar) | Max relieved error over the range (bar) |
| --- | --- | --- | --- | --- | --- |
| 0 | - | 0.05 | - | 0.05 | ±0.05 |
| 2 | 1.95 | 2.05 | -0.05 | 0.05 | |
| 4 | 3.95 | 4.05 | -0.05 | 0.05 | |
| 6 | 5.95 | 6.00 | -0.05 | 0.00 | |
| 8 | 7.95 | 8.00 | -0.05 | 0.00 | |
| 10 | 10.00 | - | 0.00 | - | |

Table 1 - Calibration Results

From the calibration results shown in Table 1, the metrological characteristics of the manometer (or pressure gauge) can be obtained in terms of:

- **Measurement Accuracy:** that is, the maximum positive and negative error: ±0.05 bar

- **Measurement Uncertainty:** or instrumental uncertainty, which takes into account the various factors related to the calibration, namely:

  - I_ref, uncertainty of the reference standard: 0.01 bar (assumed)

  - E_max, maximum relieved (measured) error: 0.05 bar

  - E_res, resolution error of the manometer: 0.05 bar

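from which the combined standard uncertainty u_c can be derived by adding in quadrature the standard uncertainties u_ref, u_max and u_res associated with I_ref, E_max and E_res (assumed uncorrelated):

$$u_c = \sqrt{u_{ref}^2 + u_{max}^2 + u_{res}^2}$$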

and then the expanded uncertainty U, at a 95% confidence level (i.e. with a coverage factor k = 2, or two standard deviations):

$$U = k \cdot u_c = 2\,u_c$$
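
The calculation can be sketched in Python using the readings from the calibration results table. The divisors that convert I_ref, E_max and E_res into standard uncertainties are assumptions of this sketch (a common GUM-style choice: I_ref treated as an expanded uncertainty with k = 2, E_max as a rectangular distribution of half-width E_max, E_res as a rectangular distribution of full width E_res), not necessarily the choices behind the figures quoted elsewhere in this article; different choices give a somewhat different U.

```python
import math

# Calibration readings from the results table (bar); None = not recorded.
reference = [0.0, 2.0, 4.0, 6.0, 8.0, 10.0]
up = [None, 1.95, 3.95, 5.95, 7.95, 10.00]
down = [0.05, 2.05, 4.05, 6.00, 8.00, None]


def relieved_errors(readings, refs):
    """Error = indication - reference value, skipping missing readings."""
    return [r - p for r, p in zip(readings, refs) if r is not None]


errors = relieved_errors(up, reference) + relieved_errors(down, reference)
# Round to 0.01 bar so floating-point noise does not leak into E_max.
e_max = round(max(abs(e) for e in errors), 2)

# Inputs from the text (bar).
i_ref = 0.01  # reference standard uncertainty ("assumed"); ASSUMED here to be k = 2
e_res = 0.05  # resolution of the manometer

# Conversion to standard uncertainties: ASSUMED distributions, see lead-in.
u_ref = i_ref / 2               # expanded (k = 2) -> standard uncertainty
u_max = e_max / math.sqrt(3)    # rectangular, half-width e_max
u_res = e_res / math.sqrt(12)   # rectangular, full width e_res

u_c = math.sqrt(u_ref**2 + u_max**2 + u_res**2)  # combined standard uncertainty
U = 2 * u_c                                      # expanded uncertainty, k = 2

print(f"E_max = ±{e_max:.2f} bar")
print(f"u_c = {u_c:.4f} bar, U = {U:.3f} bar")
```

Under these assumptions U comes out at roughly 0.065 bar, larger than the ±0.05 bar maximum error, consistent with the note below.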

NOTE:

The measurement uncertainty of the manometer (usually called instrumental uncertainty) is always higher than its measurement accuracy, because it also takes into account the resolution error of the instrument under calibration and the uncertainty of the reference standard used in the calibration process.

PDF - Traceability & Calibration Handbook (Prof. Brunelli)

Book - Instrumentation Manual (Prof. Brunelli)

Buy the “Instrumentation Manual” on Amazon.

The book illustrates:

- Part 1: the general concepts of industrial instrumentation; the symbology, terminology, and calibration of measuring instruments; the operating and application conditions of instrumentation in normal service and in areas with explosion hazards; and the main directives (ATEX, EMC, LVD, MID, and PED);

- Part 2: instrumentation for measuring physical quantities (pressure, level, flow rate, temperature, humidity, viscosity, mass density, force, and vibration) and chemical quantities (pH, redox, conductivity, turbidity, explosiveness, gas chromatography, and spectrography), covering, for each quantity, the measurement principles, the reference standards, the practical implementations, and the advantages and disadvantages in application;

- Part 3: control, regulating, and safety valves; simple feedback control and coordinated techniques (feedforward, ratio, cascade, override, split range, gap control, and variable decoupling); Distributed Control Systems (DCS) for continuous processes, Programmable Logic Controllers (PLC) for discontinuous processes, and communication protocols (buses); and finally safety systems, from operational alarms to Fire & Gas systems, ESD (emergency shutdown) systems, and Safety Instrumented Systems (SIS), with graphical and analytical determination of Safety Integrity Levels (SIL) and some practical examples.
